Phase extraction is important in various fields of optical metrology, and many phase-shifting algorithms have been developed for it. Among them, the advanced iterative algorithm (AIA) can accurately extract phase from fringe patterns with random, unknown phase shifts by iteratively estimating the phase and the phase shifts. However, these iterations make the AIA much slower than traditional phase-shifting algorithms. The problem becomes more severe when both the pixel number and the frame number are large, as required for high resolution and accuracy, which restricts AIA's wide application. In this paper, based on a detailed analysis of the algorithm's structure, a fully parallelized GPU-based AIA (gAIA) is proposed for the first time. Without sacrificing phase extraction accuracy, the gAIA achieves a 500× speedup compared with the sequential implementation on a single CPU core, and a 10× speedup compared with the state-of-the-art partial GPU implementation, which has a potential convergence issue. Also for the first time, real-time phase extraction with AIA is achieved on an ordinary NVIDIA RTX 2080 Ti GPU: the proposed gAIA takes only 26.55 ms to extract phase from 13 frames of fringe patterns with 2048 × 2048 pixels per frame.

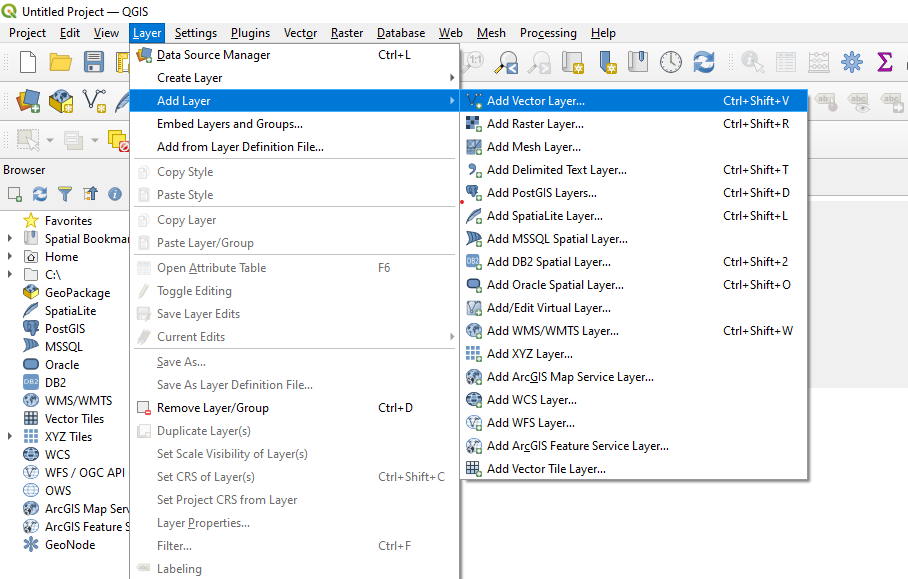

This section introduces the functions, kernel launch, and predefined parameters of the CUDA programming model with an example of the implementation of the simple vector addition shown in Fig. The familiar sequential implementation of the vector addition written in C++ is shown in Fig. 5, where the addition is iterated by a for loop. The CUDA parallel implementation is demonstrated in Figs. As shown in Fig. 6, CUDA manages the entire program in the host, which mainly contains three parts: device memory allocation/deallocation, data transfer between the host and the device, and kernel launch.

Memory and data transfer operations are realized through CUDA functions. The CUDA functions are used to configure and control the runtime behavior of CUDA and can only be called and executed in the host. The functions cudaMalloc() and cudaFree() are used to allocate and deallocate device memory, respectively; data transfer to/from device memory is carried out by the single cudaMemcpy() function, with the fourth parameter indicating the direction of the transfer. Note that the size used in these functions should be in bytes. Other CUDA functions can be found in Ref. Also note that it is often good practice to use the prefixes h_ and d_ to indicate host and device variables.

A CUDA kernel is launched in the format some_kernel<<<n1,n2>>>(a1,a2,...,an), where n1 and n2 are the number of blocks and the number of threads per block, respectively, and a1, a2, ..., an are the kernel arguments. With 256 threads per block, the number of blocks is calculated in integer arithmetic as (n + 256 − 1)/256, which is equivalent to the C/C++ ceiling operation ceil(n/256.0). This means that when n is not a multiple of 256, we are still guaranteed to use just enough blocks to add all of the elements. For example, if the size of the vectors is n = 257, then (n + 256 − 1)/256 = 2 blocks will be used. It is also worth mentioning that the number of threads per block is preferably set to a power of 2 (i.e., 2^i, i = 1, 2, 3, ...) for better speed performance, and a maximum of 1024 threads can be allocated to each block. However, due to the limits of registers, L1 cache, and shared memory mentioned in Section 2.2, we usually do not approach this limit. Instead, 64, 128, and 256 are the most commonly used in practice. Also note that only the numbers of 1-D blocks and threads can be written directly in the "<<<>>>". For higher dimensions, they should be configured using the dim3(x,y,z) struct. For example, a 2-D kernel named some_kernel should be launched as per Fig.

In real applications, to achieve high subpixel accuracy, bicubic interpolation or bicubic spline interpolation schemes are widely employed, in which the 16 interpolation coefficients at subpixel locations can be precomputed based on the intensity values at the surrounding integer pixels as well as their gradients (point C in Fig.).

Extraction of the parallelizable parts. All three of the common parallel patterns introduced in Section 1.2 can be extracted from the IC-GN algorithm. First, the reference image gradient ∇R is calculated using the tiling pattern (Section 2.8). Similarly, the interpolation coefficient LUT includes neighboring operations (Fig.). Second, as mentioned in Section 4.1, IC-GN processes all the subsets independently, which provides a straightforward pointwise pattern: every CUDA block is assigned one subset, and all the threads within the block are responsible for the calculations for that particular subset. Finally, the inner-subset sums, i.e., R m = ...
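Returning to the vector-addition example, the block-count arithmetic and the bytes-not-elements convention can be checked on the CPU without a GPU. The following is a minimal C++ sketch, not the code from the figures: all function names (vectorAddSequential, bytesFor, blocksFor, vectorAddEmulatedLaunch) are hypothetical, and the nested loops only emulate how a 1-D launch maps blocks and threads to element indices. The `i < n` guard is an assumption reflecting the usual practice of letting surplus threads in the last block do nothing.

```cpp
#include <cstddef>

// Sequential vector addition: a single for loop visits every element in turn.
// The h_ prefix marks host arrays, following the naming convention in the text.
void vectorAddSequential(const float* h_a, const float* h_b, float* h_c, int n) {
    for (int i = 0; i < n; ++i) {
        h_c[i] = h_a[i] + h_b[i];
    }
}

// Size in bytes of n floats. cudaMalloc() and cudaMemcpy() expect sizes in
// bytes, so this value (not n itself) is what would be passed to them.
constexpr std::size_t bytesFor(int n) {
    return static_cast<std::size_t>(n) * sizeof(float);
}

// Integer ceiling division: the smallest number of blocks whose threads
// cover all n elements, i.e. (n + threadsPerBlock - 1) / threadsPerBlock.
constexpr int blocksFor(int n, int threadsPerBlock) {
    return (n + threadsPerBlock - 1) / threadsPerBlock;
}

// CPU emulation of a 1-D launch (hypothetical helper, not a CUDA API).
// Each (block, thread) pair handles the element at its global index.
void vectorAddEmulatedLaunch(const float* a, const float* b, float* c,
                             int n, int threadsPerBlock) {
    const int numBlocks = blocksFor(n, threadsPerBlock);
    for (int block = 0; block < numBlocks; ++block) {
        for (int thread = 0; thread < threadsPerBlock; ++thread) {
            const int i = block * threadsPerBlock + thread;  // global index
            if (i < n) {  // assumed guard: surplus threads do nothing
                c[i] = a[i] + b[i];
            }
        }
    }
}
```

With n = 257 and 256 threads per block, blocksFor returns 2, so the emulated launch runs 512 index computations, of which only the first 257 touch memory. On a real device the two loops disappear, because the hardware runs the blocks and threads concurrently.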
